Navigation by guesswork

Before digital maps became standard, navigation relied heavily on improvisation. In a move of ultimate lo-fi ingenuity, operators would place a paper map over a radar screen and track the moving dot beneath it, estimating position from visible landmarks such as rivers, forests, or roads. This approach worked up to a point but fell short when true precision mattered. Radar could provide horizontal position, but it gave only a rough indication of altitude and no warning if the aircraft was banking too aggressively or gradually losing altitude. By the time a deviation became visible, the opportunity to correct it had already passed. This was useful for learning, but far less so for real-time operational maneuvering.

As mathematical methods were introduced, aviation began to shift from guesswork to prediction. Knowing an aircraft’s speed and direction made it possible to estimate its current position and anticipate where it would be next. Embedded algorithms allowed aircraft to reach predefined points along their flight paths, even though real-time control remained limited. With this change, flight could be guided by expectation rather than by reaction, which is the less reliable of the two.
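
The prediction step described above is commonly called dead reckoning. The following is a minimal sketch of the idea, not a reconstruction of any historical system: it assumes a flat local grid, constant speed and heading over the time step, and no wind. The function name `dead_reckon` and its parameters are illustrative.

```python
import math


def dead_reckon(x_m, y_m, speed_mps, heading_deg, dt_s):
    """Advance a flat-earth position estimate by one time step.

    x is east and y is north, both in meters.
    heading_deg is measured clockwise from north (0 = north, 90 = east).
    Assumes constant speed and heading during dt_s and ignores wind.
    """
    heading_rad = math.radians(heading_deg)
    x_new = x_m + speed_mps * math.sin(heading_rad) * dt_s
    y_new = y_m + speed_mps * math.cos(heading_rad) * dt_s
    return x_new, y_new


# Usage: an aircraft flying due east at 30 m/s for 10 s.
x, y = dead_reckon(0.0, 0.0, 30.0, 90.0, 10.0)
```

Because every step builds on the previous estimate, small errors in speed or heading accumulate over time, which is one reason prediction alone could not replace real-time feedback.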

{{How UAVs learned 1="/custom-photos-elem"}}

Command without control

With the introduction of radio control, things changed dramatically. For the first time, operators could command a flight path from the ground, which created a somewhat exaggerated sense of accomplishment. In reality, control without awareness remained fragile. An aircraft that follows commands without knowing its own altitude, speed, or trajectory is still operating on the edge of failure. If, for example, an aircraft was instructed to roll left, it would continue rolling whether that command produced a safe maneuver or a catastrophic failure. Early UAV operation demanded constant attention because the system had no instinct for self-preservation and no ability to correct its own behavior. So while operators did gain greater control over flight paths, overall safety and mission success had yet to be properly addressed.

At that time, data from the aircraft was extremely limited. This mode is known in the UAV space as C1 (command-only). In practice, the operator had only two ways to keep track of the aircraft: radar, which effectively acted as a ground control station, or direct visual observation through a camera feed.

An example of such command-only communication was the operation of the first lunar rover. A dedicated crew of five operated the rover from control panels, watching images from the onboard cameras on monitors. Both panoramic images and low-frame-rate television images were transmitted. Because of the distance between the Earth and the Moon, the signal was delayed, and the crew had to make decisions based on intermittently updated frames.

{{How UAVs learned 2="/custom-photos-elem"}}

Stability before intelligence

The first meaningful advancement in autopilot came from stability rather than autonomy. Gyroscopes in particular gave engineers a reliable way to measure orientation and prevent dangerous deviations in flight.

By resisting orientation changes, not unlike how a spinning top maintains balance, gyroscopes allowed aircraft to hold safer angles, maintain altitude, and stabilize their heading. Similar principles later appeared in commercial aviation, where early autopilot systems could maintain a steady course, albeit still lacking an awareness of external conditions.

At this point, telemetry began to emerge, enabling the transmission of sensor data from onboard systems. For the first time, control was no longer only about sending commands; it also included receiving continuous feedback from the aircraft. Command and control (C2) communication brought the loop closer to real time, combining the relay of commands to the aircraft (fly, land, change direction) with the reception of telemetry from the aircraft itself (position, speed, battery, status). The onboard controller functioned as a stabilizing layer rather than a decision-making system, reducing the likelihood of catastrophic errors but not yet enabling the aircraft to interpret or meaningfully respond to its environment.
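
To illustrate what a stabilizing layer means in practice, here is a minimal sketch of a roll limiter with proportional correction. It is an illustration under stated assumptions, not a description of any particular flight controller: the 30-degree bank limit, the gain, the `Telemetry` fields, and the sign convention (a positive output rolls toward positive angles) are all hypothetical.

```python
from dataclasses import dataclass

MAX_ROLL_DEG = 30.0  # hypothetical safe bank limit, chosen for illustration


@dataclass
class Telemetry:
    """Downlink half of the loop: what the aircraft reports back."""
    roll_deg: float
    speed_mps: float
    battery_pct: float


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def stabilize_roll(commanded_roll_deg: float, telemetry: Telemetry,
                   gain: float = 0.5) -> float:
    """Return a normalized aileron command in [-1, 1].

    The operator's request is first clamped to the safe bank limit, then a
    proportional term steers the measured roll toward that clamped target.
    """
    target = clamp(commanded_roll_deg, -MAX_ROLL_DEG, MAX_ROLL_DEG)
    error = target - telemetry.roll_deg
    return clamp(gain * error / MAX_ROLL_DEG, -1.0, 1.0)


# Usage: the operator asks for a 90-degree roll while the aircraft is level.
state = Telemetry(roll_deg=0.0, speed_mps=25.0, battery_pct=80.0)
aileron = stabilize_roll(90.0, state)
```

The layer never questions why the operator wants to roll. It only refuses to go past the limit and stops pushing once the limit is reached, which matches the role of a stabilizer rather than a decision-maker.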

{{How UAVs learned 3="/custom-photos-elem"}}

Small hardware, big shift

The next major evolutionary step was driven by hardware rather than theory. For a long time, inertial systems were too large and expensive for widespread use, certainly for onboard use. In the 1990s, MEMS (micro-electromechanical systems) sensors moved from laboratory research into real-world applications, offering chip-scale measurement of motion and acceleration while gradually reducing reliance on bulky mechanical gyroscopes. At that size and cost, what had once been an aspiration became practical to install on board. This made modern flight controllers viable and widespread. Stabilization no longer required bulky equipment, and UAV systems became easier to deploy, scale, and integrate into real-world applications.

It should be noted that, at this time, automation alone was not sufficient to achieve what we understand today as autopilot. Automation could execute predefined instructions, but a mature autopilot system also needed to analyze conditions, stabilize the aircraft, adapt to changes, and continue operating within safe parameters even when external inputs became unreliable. Autonomous decision-making remained limited, and in most cases the only truly autonomous maneuver was a return to the takeoff point in the event of radio link loss. Even this, however, depended on the availability of GNSS sensors.

The addition of GNSS further broadened capabilities by providing reliable positioning. Aircraft could now hold position, follow predefined routes, and return to their starting point without continuous manual input. This marked a real inflection point when unmanned aviation began to shift from experimental to operational.

{{How UAVs learned 4="/custom-photos-elem"}}

Evolution of remote piloting

While all that was happening, the introduction of digital video cameras led to the emergence of FPV (first-person view) UAVs. This advancement provided spatial awareness relative to surrounding objects and created a true sense of flight. Operators could see where the aircraft was going, strengthening their sense of flight control. The flipside is that it also made failures more immediate, as losing an image often meant the aircraft had suffered a collision or other catastrophic failure.

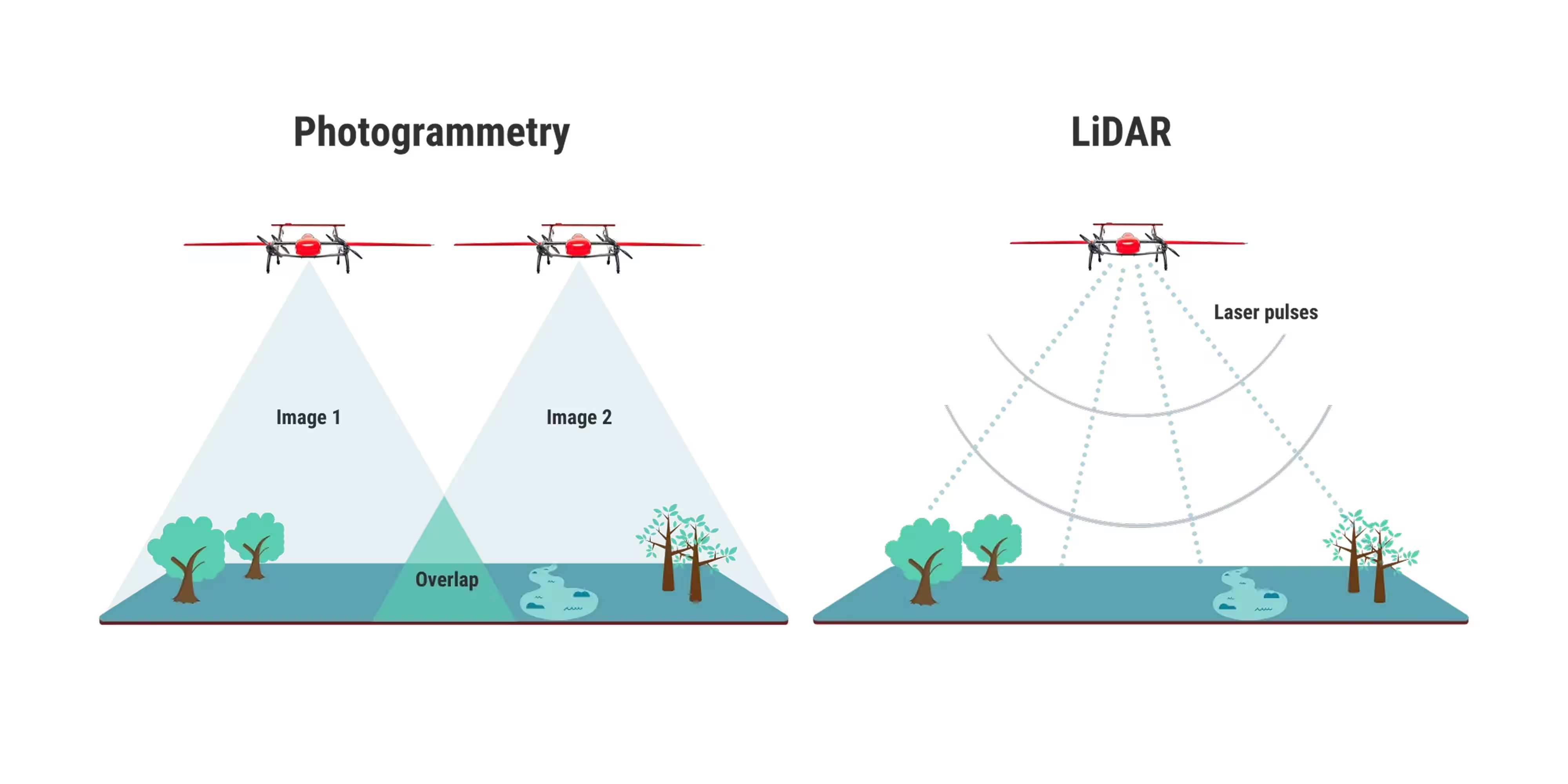

With flight controllers rapidly evolving, it became possible to create preplanned missions on the ground. Ground control systems initially acted as remote pilots that only issued commands. The ground control station eventually evolved into a system responsible for both issuing commands and receiving data from the aircraft, finally enabling mission control.

The illusion of autonomy

As systems improved, many appeared autonomous but in fact fell short of that goal. Flight paths were still planned on the ground, translated into command sequences, and executed in the air as long as the communication link remained intact. Reliable operation also depended heavily on terrain data and careful mission planning. When the radio link failed, these limitations became painfully obvious. The only genuinely autonomous capability of that era was the ability to return to the takeoff position in the event of radio link loss.

This distinction matters in practice because a system that only follows commands cannot sustain a mission under uncertainty. True autopilot requires enough internal awareness to maintain viable behavior even when the original plan no longer fully applies.

The pilot becomes the bottleneck

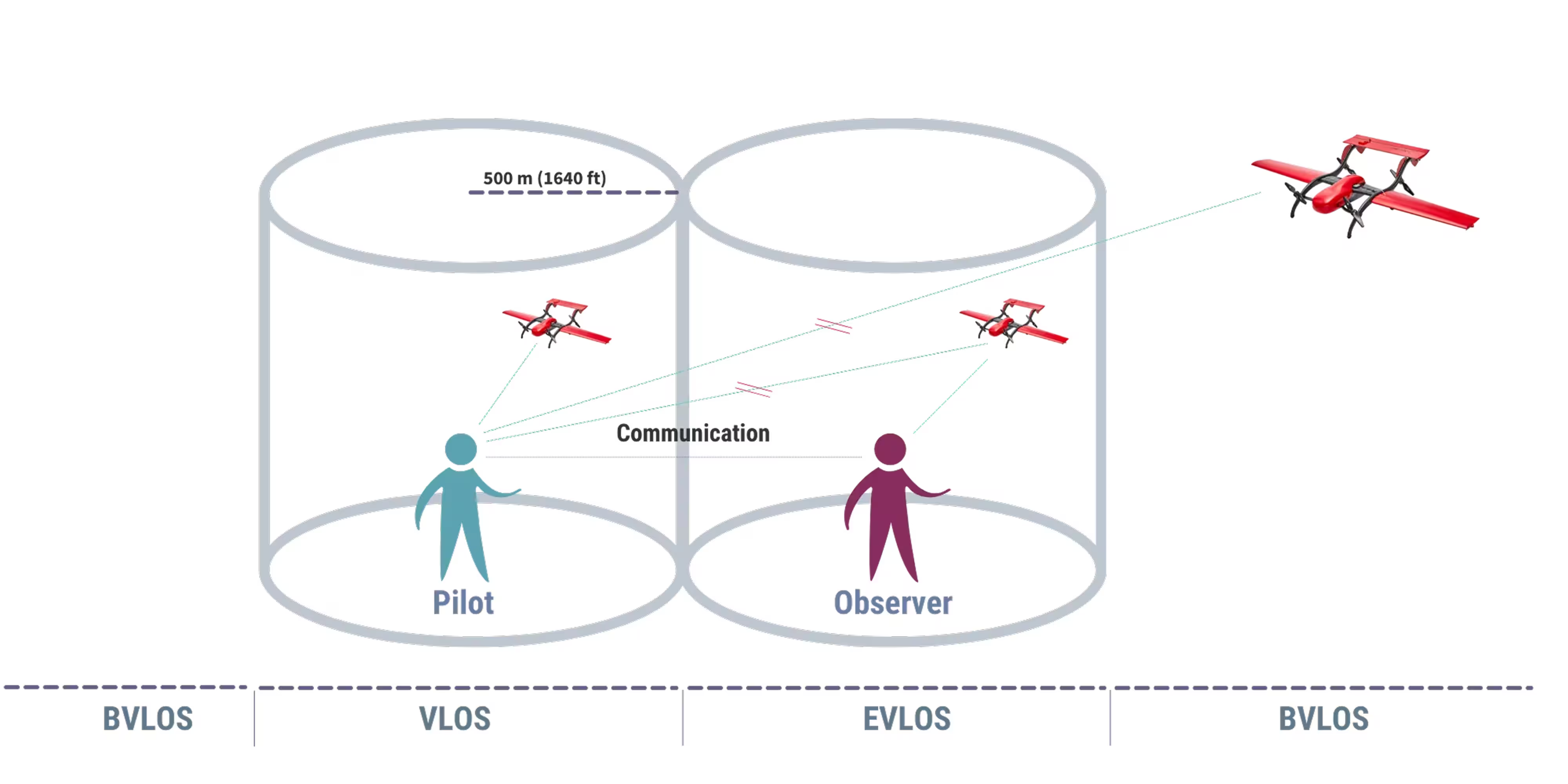

Manual control can feel empowering at first, much like the manual transmission on a 1969 Ford Mustang Mach 1, but the appeal is largely aesthetic: it provides a direct connection between operator and machine. As missions increased in complexity, distances expanded, and terrain became less predictable, that same control began to introduce friction instead of confidence. When that happens, the operator no longer enhances the system but becomes its limitation.

Even when the pilot becomes a bottleneck, the C2 link remains valuable. But when that link fails, the entire mission plan must already be available to the onboard controller. This is where the true evolution of autopilot shows: the autopilot can continue the mission after losing the C2 link, but only if the mission was preplanned. That raises a question: is this autonomous, or merely automatic?
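
The link-loss behavior discussed in this article can be written as a small decision function. This is a hedged sketch rather than any vendor's failsafe logic: the `Action` names, the assumption that waypoint following needs a GNSS fix, and the final stabilize-only fallback are illustrative choices.

```python
from enum import Enum


class Action(Enum):
    FOLLOW_OPERATOR = "follow_operator"
    CONTINUE_MISSION = "continue_mission"
    RETURN_HOME = "return_home"
    STABILIZE_ONLY = "stabilize_only"  # hypothetical last-resort mode


def choose_action(link_ok: bool, mission_onboard: bool, gnss_ok: bool) -> Action:
    """Pick a behavior from the state of the C2 link, mission plan, and GNSS.

    Assumption: both continuing the mission and returning home need a
    position fix, so without GNSS the sketch falls back to stabilization.
    """
    if link_ok:
        return Action.FOLLOW_OPERATOR
    if mission_onboard and gnss_ok:
        return Action.CONTINUE_MISSION
    if gnss_ok:
        return Action.RETURN_HOME
    return Action.STABILIZE_ONLY


# Usage: the link drops mid-flight, but the plan was uploaded before takeoff.
action = choose_action(link_ok=False, mission_onboard=True, gnss_ok=True)
```

Every branch here is fixed in advance, which is the point of the question: a system that only walks a table like this is automatic, while autonomy implies handling situations the table did not anticipate.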

As an example, at FIXAR (a full-stack UAV technology company), the autopilot is treated as a core component rather than an add-on. The autopilot defines how the aircraft behaves under real-world conditions, with the goal of simplifying operations and maintaining reliability in demanding environments. Because real missions rarely unfold under ideal conditions, the system must do more than execute commands. A truly functioning system needs to maintain stable flight, adapt to environmental changes, and keep the aircraft within a safe operating envelope even as conditions degrade. It must also make informed decisions to maintain mission integrity and adjust in real time to improve the likelihood of success. This is where true autonomy comes into play.

{{How UAVs learned 5="/custom-photos-elem"}}

Maximized autonomy

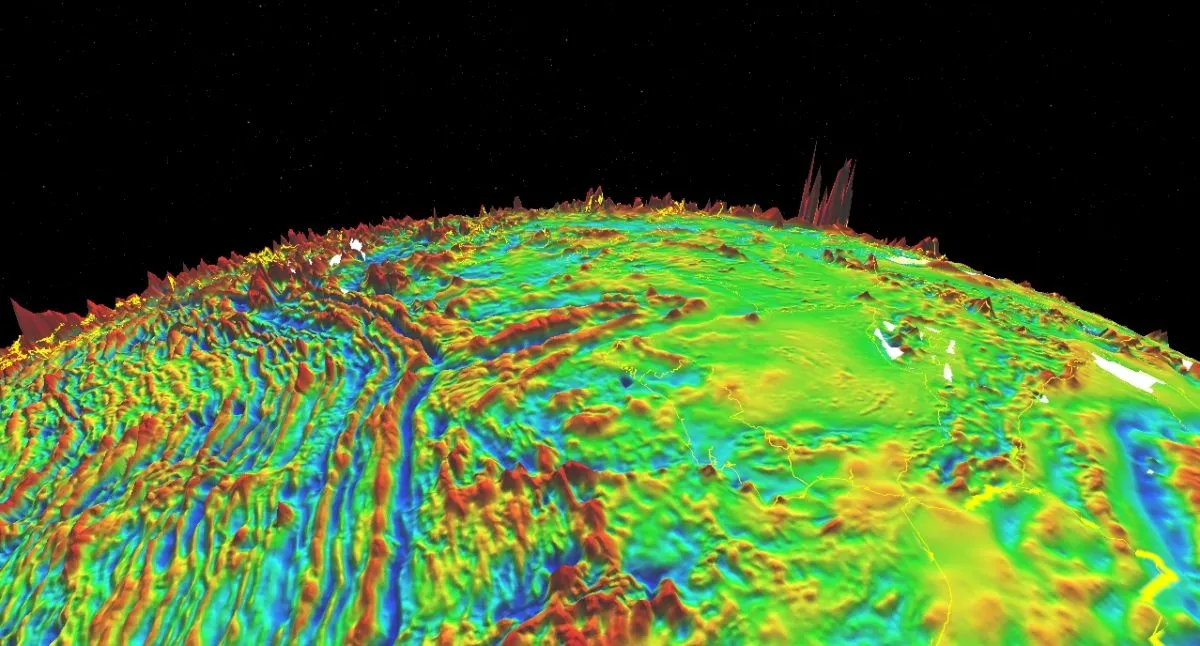

The most challenging scenarios emerge when navigation systems begin to fail. Many UAV platforms rely heavily on GNSS, and when that signal degrades or disappears, their functionality declines rapidly, often defaulting to return-to-home behavior based on outdated assumptions. While this might rescue the aircraft, it makes mission success secondary.

FIXAR addresses this limitation by incorporating navigation logic designed for degraded conditions. Through ThermalFlow, a computer-vision-based navigation system, the aircraft tracks movement relative to the ground by identifying visual reference points, preserving their spatial relationships, and measuring how they shift over time. This allows the system to maintain a continuous estimate of motion even when satellite positioning is unreliable, giving the aircraft a level of resilience that simpler systems cannot match and keeping the priority on overall mission success.
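
The general principle of estimating motion from shifting visual reference points can be sketched in a few lines. This is a generic illustration and not ThermalFlow's actual implementation, which is not described here. It assumes a downward-facing pinhole camera over flat ground, already-matched points between two frames, a known height above ground, and no rotation between frames. The function name and parameters are hypothetical.

```python
from statistics import median


def ground_shift_m(prev_pts, curr_pts, altitude_m, focal_px):
    """Estimate camera translation over the ground between two frames.

    prev_pts and curr_pts are equal-length lists of (x, y) pixel positions
    of the same reference points in consecutive frames.
    Returns (dx, dy) in meters along the image x and y axes.
    """
    if not prev_pts or len(prev_pts) != len(curr_pts):
        raise ValueError("need the same non-zero number of points per frame")

    # The median shift tolerates a few mismatched points better than the mean.
    dx_px = median(c[0] - p[0] for p, c in zip(prev_pts, curr_pts))
    dy_px = median(c[1] - p[1] for p, c in zip(prev_pts, curr_pts))

    # Pinhole model for a nadir camera: meters on the ground per image pixel.
    meters_per_px = altitude_m / focal_px

    # Ground features appear to move opposite to the camera's own motion.
    return -dx_px * meters_per_px, -dy_px * meters_per_px


# Usage: three tracked points drift about 10 px along x between frames.
prev = [(100, 100), (200, 150), (300, 120)]
curr = [(110, 100), (210, 150), (310, 121)]
dx, dy = ground_shift_m(prev, curr, altitude_m=50.0, focal_px=1000.0)
```

Summing these per-frame shifts gives a running position estimate that does not depend on satellites, at the cost of drift that grows with distance flown.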

A real-time surface track-and-follow function allows flights to be carried out without detailed terrain data, even in challenging environments. With it, the system adapts and corrects dynamically as conditions change, behaves predictably, and completes the mission. This is where the path to true flight autonomy really begins.

Because the real test of autonomy isn’t just when everything works. It’s when it doesn’t.

{{How UAVs learned 6="/custom-photos-elem"}}

So, what happens when a ground-control station is no longer needed? In the next article, we’ll explore how close we are to handling the toughest missions with nothing more than a single command. And everything that follows after.